Mailgun Blog

Weekly Product Update: Control Panel Improvements and 135x Faster Spell Checker for Email Validation

Initial changes and improvements made to the control panel and email verification API in the early days of Mailgun.

This week we focused on improving the Mailgun control panel and the spelling corrector for the email verification API.

Table of contents

Control panel gets a facelift and some new features

Domain status

Speeding up the email validator spelling corrector by 135x

What about accuracy?

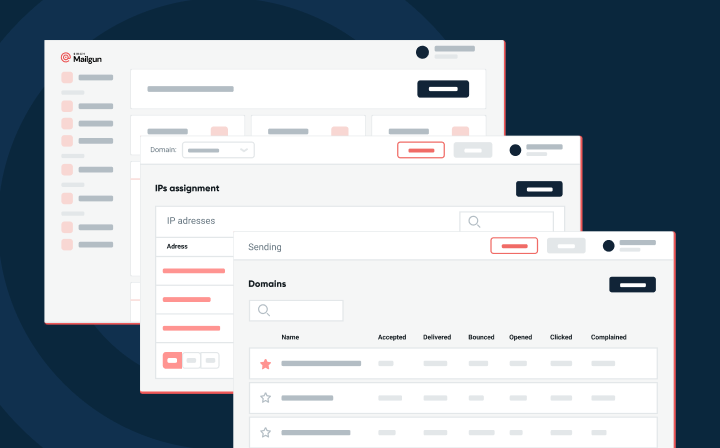

Control panel gets a facelift and some new features

You might not have noticed, but our control panel didn’t win any design awards. :) The team here has historically had an engineering focus and has been more proficient with Python and Golang than with HTML5 and CSS. But we recently hired an awesome UX designer and front-end developer to help improve the experience of using Mailgun via the control panel. So this week, we rolled out a new version of the control panel with a bunch of subtle visual enhancements and a new feature we think you’ll find useful: domain status. This is just the first step; we will be rolling out more UX improvements and features in the future.

Domain status

Every Mailgun domain has one of three statuses: active, unverified, or disabled. Here’s what each means and why you should care:

Active: your domain has all the appropriate DNS records set up and is in good standing.

Unverified: your domain is missing some or all of the required sending DNS records. This is a problem because without these records, your emails will most likely end up in spam. You should fix this as soon as possible.

Disabled: your domain can no longer send email, most likely because of a high rate of bounces or complaints. Reach out to us at support@mailgun.com to resolve this.

These statuses give you a quick view of all your domains, which is particularly useful if you operate a large-scale platform for customers on top of Mailgun. It is now much easier to see which of your customers are having problems and which need your help. Domain status is also available through the domains API if you’d prefer to check it programmatically.
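For operators managing many customer domains, here is a minimal sketch of how status data might be triaged once it has been fetched. It does not call the API. It assumes each domain record is a dict with hypothetical `name` and `state` keys (the exact field names returned by the domains API are an assumption here), and it maps each status to the next step described in the list above.

```python
from collections import defaultdict

# Assumption: each record looks like {"name": ..., "state": ...}.
# These field names are illustrative, not a documented contract.
KNOWN_STATES = ("active", "unverified", "disabled")

# Suggested next step per status, summarizing the status descriptions.
NEXT_STEP = {
    "active": "no action needed",
    "unverified": "add the missing sending DNS records",
    "disabled": "contact support@mailgun.com",
}


def group_by_state(domains):
    """Group domain names by status; unrecognized states go under 'unknown'."""
    groups = defaultdict(list)
    for d in domains:
        state = d.get("state", "unknown")
        groups[state if state in KNOWN_STATES else "unknown"].append(d["name"])
    return dict(groups)


def needs_attention(domains):
    """Return (name, next step) pairs for every domain that is not active."""
    return [
        (d["name"], NEXT_STEP.get(d.get("state"), "check domain status"))
        for d in domains
        if d.get("state") != "active"
    ]


if __name__ == "__main__":
    sample = [
        {"name": "customer-a.example.com", "state": "active"},
        {"name": "customer-b.example.com", "state": "unverified"},
        {"name": "customer-c.example.com", "state": "disabled"},
    ]
    print(group_by_state(sample))
    for name, step in needs_attention(sample):
        print(f"{name}: {step}")
```

Keeping the triage logic separate from the HTTP call makes it easy to reuse whether the records come from the API or from a cached export.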

Speeding up the email validator spelling corrector by 135x

When we first started working on Guardpost, our email verification API, we knew we wanted it to offer typo suggestions (catching typos like gmall.com instead of gmail.com). Every day we see tons of emails fail to reach their intended destinations because of typos, and we wanted to do something about it. We brainstormed internally and researched how other people were solving this problem. Like many others, we came across Peter Norvig’s article on how to write a spelling corrector, and we built the spelling corrector in the first version of Guardpost around his idea.

What we quickly found was that our CPU was being hammered, slowing Guardpost down, even though Guardpost was running on a pretty beefy box. Some profiling quickly showed that we were spending a disproportionate amount of time in the spelling corrector.

The initial version of the spelling corrector worked by generating many candidate strings, called edits, from the original word: every string you can reach by deleting, transposing, replacing, or inserting characters. It then searched those edits for the known word requiring the fewest edits from the original (the smallest edit distance), and that word became the suggested correction.
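To make the edit-based approach concrete, here is a minimal sketch of a Norvig-style corrector adapted to domains. It is not Guardpost’s code: the `ALPHABET` of domain characters and the frequency-weighted `KNOWN_DOMAINS` table are illustrative assumptions. It also shows where the cost comes from, since `edits2` builds every string two edits away from the input.

```python
import string

# Assumption: characters that may appear in a domain name.
ALPHABET = string.ascii_lowercase + string.digits + "-."

# Hypothetical known domains with made-up frequency weights.
KNOWN_DOMAINS = {"gmail.com": 100, "yahoo.com": 60, "hotmail.com": 50, "aol.com": 20}


def edits1(word):
    """All strings one edit away: deletes, transposes, replaces, inserts."""
    splits = [(word[:i], word[i:]) for i in range(len(word) + 1)]
    deletes = [left + right[1:] for left, right in splits if right]
    transposes = [left + right[1] + right[0] + right[2:]
                  for left, right in splits if len(right) > 1]
    replaces = [left + c + right[1:] for left, right in splits if right for c in ALPHABET]
    inserts = [left + c + right for left, right in splits for c in ALPHABET]
    return set(deletes + transposes + replaces + inserts)


def edits2(word):
    """All strings two edits away; this is where the work explodes."""
    return {e2 for e1 in edits1(word) for e2 in edits1(e1)}


def known(words):
    """Keep only candidates that are known domains."""
    return {w for w in words if w in KNOWN_DOMAINS}


def correct(domain):
    """Try the domain itself, then known domains 1 edit away, then 2 edits away."""
    candidates = (known([domain]) or known(edits1(domain))
                  or known(edits2(domain)) or {domain})
    # Within the closest tier found above, prefer the most frequent domain.
    return max(candidates, key=lambda w: KNOWN_DOMAINS.get(w, 0))


if __name__ == "__main__":
    print(correct("gmall.com"))  # gmail.com
```

With this 38-character alphabet, `edits1` builds 78n + 37 strings for an n-character input before duplicates are removed (739 for `gmall.com`), and `edits2` repeats that for every one of them, so a two-edit search touches hundreds of thousands of strings.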

That works well for English words, but we weren’t correcting English words; we were correcting a very specific vocabulary: domain names. So we started looking at algorithms that would perform better for our use case of typo correction for domains, and we ran lots of experiments to see which performed best. We judged each algorithm on both speed and accuracy.

After a lot of research, experimentation, and tuning, we ended up using difflib, a module in Python’s standard library that uses a variant of the Ratcliff-Obershelp algorithm to compute the similarity of two strings. It is accurate for correcting domains, and it is much faster than our original corrector. Just how much faster? 135 times faster!
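Here is a minimal sketch of similarity-based correction with `difflib`. `SequenceMatcher.ratio()` returns 2.0*M/T, where M is the number of matched characters and T is the combined length of both strings, and `get_close_matches` returns the best-scoring candidates above a cutoff. The `KNOWN_DOMAINS` list and the 0.8 cutoff are assumptions for illustration, not Mailgun’s tuned values. Instead of generating candidate edits, it scores the input directly against each known domain, so the work grows with the size of the domain list rather than with the number of possible edits.

```python
import difflib

# Hypothetical list of known domains; a real list would be much larger.
KNOWN_DOMAINS = ["gmail.com", "yahoo.com", "hotmail.com", "aol.com", "outlook.com"]


def similarity(a, b):
    """difflib's ratio: 2*M / T, with M matched characters and T total length."""
    return difflib.SequenceMatcher(None, a, b).ratio()


def suggest(domain, cutoff=0.8):
    """Return the most similar known domain, or None if nothing clears the cutoff.

    The 0.8 cutoff is an illustrative assumption, not a tuned value.
    """
    if domain in KNOWN_DOMAINS:
        return domain
    matches = difflib.get_close_matches(domain, KNOWN_DOMAINS, n=1, cutoff=cutoff)
    return matches[0] if matches else None


if __name__ == "__main__":
    print(round(similarity("gmall.com", "gmail.com"), 3))  # 0.889
    print(suggest("gmall.com"))  # gmail.com
```

For `gmall.com` versus `gmail.com`, the matching blocks are `gma` and `l.com`, so M = 8 and T = 18, giving a ratio of 16/18 ≈ 0.89, comfortably above the cutoff.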

What about accuracy?

To measure accuracy, we built a test dataset that included common typos our DNS server saw, as well as random mutations. Random mutations include deleting random characters from a domain, adding random characters, shortening words, and lengthening words. In total, we had about 1,000 tests.
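As a rough illustration, a mutation-based test set and an accuracy score could be generated like this. Only two of the mutation types described above are implemented (deleting and inserting a random character); the alphabet, the 50/50 split between them, and the function names are assumptions, and the real dataset also mixed in typos our DNS server actually saw.

```python
import random
import string

# Assumption: characters used when inserting a random character.
ALPHABET = string.ascii_lowercase + string.digits


def mutate(domain, rng):
    """Apply one random mutation: delete a character or insert a random one."""
    i = rng.randrange(len(domain))
    if rng.random() < 0.5 and len(domain) > 1:
        return domain[:i] + domain[i + 1:]  # delete the character at position i
    return domain[:i] + rng.choice(ALPHABET) + domain[i:]  # insert before position i


def build_tests(domains, per_domain, seed=0):
    """Make (typo, expected) pairs; a fixed seed keeps the dataset reproducible."""
    rng = random.Random(seed)
    return [(mutate(d, rng), d) for d in domains for _ in range(per_domain)]


def accuracy(corrector, tests):
    """Fraction of typos the corrector maps back to the intended domain."""
    hits = sum(1 for typo, expected in tests if corrector(typo) == expected)
    return hits / len(tests)


if __name__ == "__main__":
    tests = build_tests(["gmail.com", "yahoo.com"], per_domain=3)
    print(tests)
    # An identity "corrector" never fixes a mutated domain, so this prints 0.0.
    print(accuracy(lambda typo: typo, tests))
```

Any function that takes a typo and returns a suggested domain can be passed to `accuracy`; fixing the seed means competing correctors are scored on exactly the same mutations.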

On the overall dataset, the new corrector had an accuracy of 93.9%, while the old corrector had an accuracy of 98.9%.

As you can see, these tests heavily favor the original corrector. The random mutations are essentially character edits, which is exactly where an edit-based corrector shines.

When you remove the random mutations and look only at real-world typos that our DNS servers picked up, our new corrector has an accuracy of 95.8%, while our original corrector has an accuracy of 96.3%! That’s less than one percentage point of accuracy lost on realistic typos in exchange for a 135x gain in speed. Not a bad trade-off at all.
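Spelling out the arithmetic behind that trade-off, using the accuracy figures reported above: on real-world typos the drop is half a percentage point, which is also about half a percent in relative terms, while on the full dataset (which includes the random mutations) the gap is five points.

```latex
\begin{aligned}
\Delta_{\text{real}} &= 96.3\% - 95.8\% = 0.5 \text{ percentage points} \\
\frac{\Delta_{\text{real}}}{96.3\%} &= \frac{0.5}{96.3} \approx 0.52\% \text{ relative loss} \\
\Delta_{\text{all}} &= 98.9\% - 93.9\% = 5.0 \text{ percentage points}
\end{aligned}
```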

That’s just one of the things we’ve been working on behind the scenes to improve Guardpost and the experience people have with it. All of this (and more) will soon be open sourced, so you’ll be able to take a look at it (and play with it) yourself. Stay tuned!

That’s it for this week.

Happy sending!

The Mailgunners